Resource Management for Distributed Binary Neural Networks in Programmable Data Plane

IEEE/IFIP Network Operations and Management Symposium (NOMS) 2026

Rome, Italy, 18–22 May 2026


Fatemeh Babaei (Sharif University of Technology), Mahdi Dolati (Sharif University of Technology), Mojtaba Mozhganfar (University of Tehran), Sina Darabi (Università della Svizzera Italiana), Farzad Tashtarian (University of Klagenfurt)

Abstract

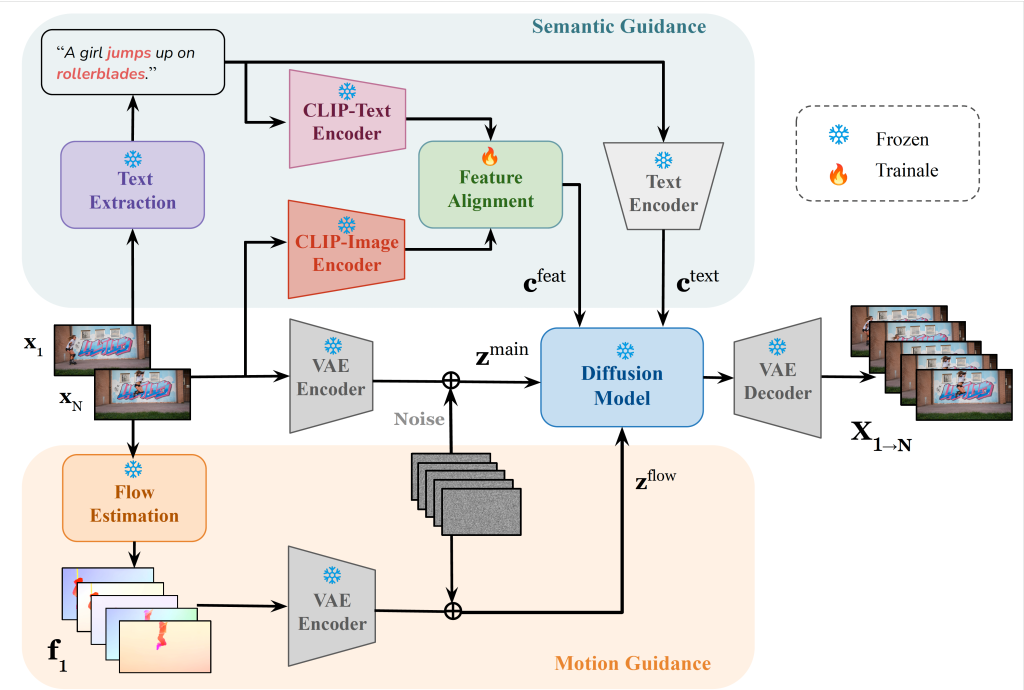

Programmable networks enable the deployment of customized network functions that can process traffic at line rate. The growing traffic volume and the increasing complexity of network management have motivated the use of data-driven and machine learning–based functions within the network. Recent studies demonstrate that machine learning models can be fully executed in the data plane to achieve low latency. However, the limited hardware resources of programmable switches pose a significant challenge for deploying such functions. This work investigates Binary Neural Networks (BNNs) as an effective mechanism for implementing network functions entirely in the data plane. We propose a network-wide resource allocation algorithm that exploits the inherent distributability of neural networks across multiple switches. The algorithm builds on a linear programming relaxation and randomized rounding framework to achieve efficient resource utilization. We implement our approach using Mininet and BMv2 software switches. Comprehensive evaluations on two public datasets show that our method attains near-optimal performance in small-scale networks and consistently outperforms baseline schemes in larger deployments.
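To make the "linear programming relaxation and randomized rounding" idea concrete, the following is a minimal, generic sketch of the rounding step only. It is not the algorithm from the paper, whose formulation is not given in the abstract. It assumes a fractional layer-to-switch assignment has already been obtained from some LP relaxation; the function name `randomized_rounding`, the `frac`, `demand`, and `capacity` inputs, and the resample-on-overload policy are all hypothetical choices made for illustration.

```python
import random


def randomized_rounding(frac, demand, capacity, rng, max_tries=100):
    """Round a fractional layer-to-switch assignment to an integral one.

    frac[l][s]  : fractional share of BNN layer l on switch s; each row is
                  assumed to sum to 1 and to come from an LP solved elsewhere.
    demand[l]   : resource units layer l needs (hypothetical unit).
    capacity[s] : resource units available on switch s.
    Returns a list mapping each layer index to a switch index, or None if
    no feasible rounding is found within max_tries samples.
    """
    switches = list(range(len(capacity)))
    for _ in range(max_tries):
        # Sample one switch per layer, using the LP values as probabilities.
        placement = [rng.choices(switches, weights=row)[0] for row in frac]
        # Accept the sample only if no switch is overloaded.
        load = [0] * len(capacity)
        for layer, switch in enumerate(placement):
            load[switch] += demand[layer]
        if all(used <= cap for used, cap in zip(load, capacity)):
            return placement
    return None


# Hypothetical fractional solution for 3 layers and 3 switches.
frac = [
    [0.7, 0.3, 0.0],
    [0.2, 0.5, 0.3],
    [0.0, 0.4, 0.6],
]
demand = [2, 2, 2]    # each layer needs 2 units
capacity = [3, 3, 3]  # so each switch can host at most one layer

placement = randomized_rounding(frac, demand, capacity, random.Random(0))
print(placement)
```

In this toy instance every feasible placement puts each layer on a different switch, and a layer is never placed on a switch whose fractional value is zero. Resampling until the capacities hold is only one simple way to repair an infeasible rounding; other repair strategies are possible.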