YTLive: A Dataset of Real-World YouTube Live Streaming Sessions

IEEE/IFIP Network Operations and Management Symposium (NOMS) 2026

Rome, Italy, 18–22 May 2026

[PDF]

IEEE/IFIP Network Operations and Management Symposium (NOMS) 2026

Rome, Italy, 18–22 May 2026

[PDF]

Fatemeh Babaei (Sharif University of Technology), Mahdi Dolati (Sharif University of Technology), Mojtaba Mozhganfar (University of Tehran), Sina Darabi (Università della Svizzera Italiana), Farzad Tashtarian (University of Klagenfurt)

Abstract

Programmable networks enable the deployment of customized network functions that can process traffic at line rate. The growing traffic volume and the increasing complexity of network management have motivated the use of data-driven and machine learning–based functions within the network. Recent studies demonstrate that machine learning models can be fully executed in the data plane to achieve low latency. However, the limited hardware resources of programmable switches pose a significant challenge for deploying such functions. This work investigates Binary Neural Networks (BNNs) as an effective mechanism for implementing network functions entirely in the data plane. We propose a network-wide resource allocation algorithm that exploits the inherent distributability of neural networks across multiple switches. The algorithm builds on the linear programming relaxation and randomized rounding framework to achieve efficient resource utilization. We implement our approach using Mininet and bmv2 software switches. Comprehensive evaluations on two public datasets show that our method attains near-optimal performance in small-scale networks and consistently outperforms baseline schemes in larger deployments.
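
To illustrate why BNNs suit resource-constrained switches, the following is a minimal sketch of a binarized neuron evaluated with XNOR and popcount, the bitwise formulation commonly used for BNN inference. It is not the authors' implementation: the bit encoding (1 for +1, 0 for -1), the zero threshold, and the names `binary_neuron` and `binary_layer` are assumptions made for this example.

```python
def binary_neuron(x_bits: int, w_bits: int, n: int) -> int:
    """Evaluate one binarized neuron over n inputs.

    Inputs and weights are packed into integers, one bit per value
    (assumed encoding: bit 1 means +1, bit 0 means -1).
    """
    mask = (1 << n) - 1
    # XNOR marks the positions where input and weight agree.
    xnor = ~(x_bits ^ w_bits) & mask
    matches = bin(xnor).count("1")  # popcount
    # Each agreement contributes +1 and each disagreement -1 to the dot product.
    dot = 2 * matches - n
    # Sign activation with an assumed threshold of zero.
    return 1 if dot >= 0 else 0


def binary_layer(x_bits: int, weights: list[int], n: int) -> int:
    """Evaluate a layer; output bit i is the activation of neuron i."""
    out = 0
    for i, w_bits in enumerate(weights):
        out |= binary_neuron(x_bits, w_bits, n) << i
    return out


if __name__ == "__main__":
    # Two neurons over a 4-bit input.
    print(bin(binary_layer(0b1011, [0b1001, 0b0100], 4)))
```

Because each layer only consumes the packed output bits of the previous one, consecutive layers could in principle be placed on different switches, which is the kind of distributability the abstract refers to.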

IEEE/IFIP Network Operations and Management Symposium (NOMS) 2026

Rome, Italy, 18–22 May 2026

[PDF]

Mojtaba Mozhganfar (University of Tehran), Masoumeh Khodarahmi (IMDEA), Daniele Lorenzi (Bitmovin), Mahdi Dolati (Sharif University of Technology), Farzad Tashtarian (Alpen-Adria-Universität Klagenfurt), Ahmad Khonsari (University of Tehran), Christian Timmerer (Alpen-Adria-Universität Klagenfurt)

Abstract

Volumetric video streaming enables six degrees of freedom (6DoF) interaction, allowing users to navigate freely within immersive 3D environments. Despite notable advancements, volumetric video remains an emerging field, presenting ongoing challenges and vast opportunities in content capture, compression, transmission, decompression, rendering, and display. As user expectations grow, delivering high Quality of Experience (QoE) in these systems becomes increasingly critical due to the complexity of volumetric content and the demands of interactive streaming. This paper reviews recent progress in QoE for volumetric streaming, beginning with an overview of QoE evaluation studies for volumetric video streaming, including subjective assessments tailored to 6DoF content. The core focus of this work is on objective QoE modeling, where we analyze existing models based on their input factors and methodological strategies. Finally, we discuss the key challenges and promising research directions for building perceptually accurate and adaptable QoE models that can support the future of immersive volumetric media.

[PDF]

Wei Zhou, Yixiao Li, Hadi Amirpour, Xiaoshuai Hao, Jiang Liu, Peng Wang, Hantao Liu

Abstract: Single image super-resolution (SR) has achieved remarkable progress with deep learning, yet most approaches rely on distortion-oriented losses or heuristic perceptual priors, which often lead to a trade-off between fidelity and visual quality. To address this issue, we propose an Efficient Perceptual Bi-directional Attention Network (Efficient-PBAN) that explicitly optimizes SR towards human-preferred quality. The proposed framework is trained on a newly constructed SR quality dataset that covers a wide range of state-of-the-art SR methods with corresponding human opinion scores. Using this dataset, Efficient-PBAN learns to predict perceptual quality in a way that correlates strongly with subjective judgments. The learned metric is further integrated into SR training as a differentiable perceptual loss, enabling closed-loop alignment between reconstruction and perceptual assessment. Extensive experiments demonstrate that our approach delivers superior perceptual quality.

[PDF]

MohammadAli Hamidi, Hadi Amirpour, Christian Timmerer, Luigi Atzori

Abstract: Filter-altered images are increasingly prevalent in online visual communication, particularly on social media platforms. Assessing their perceived quality is essential for effectively managing visual communication. However, the perceived quality is content-dependent and non-monotonic, posing challenges for distortion-centric Image Quality Assessment (IQA) models. The Image Manipulation Quality Assessment (IMQA) benchmark addressed this gap with a dual-stream baseline that fuses filter-aware and quality-aware encoders via an MS-CAM attention module. However, only eight of the ten dataset folds are publicly released, making the task more data-constrained than the original 10-fold protocol. To overcome this limitation, we propose ProgressIQA, a data-efficient framework that integrates ensemble self-training, label distribution stratification, and multi-stage progressive curriculum learning. Fold-specific models are ensembled to generate stable teacher predictions, which are used as pseudo-labels for external filter-augmented images. These pseudo-labels are then balanced through stratified sampling and combined with the original data in a progressive curriculum that transfers knowledge from coarse to fine resolution across stages. Under the restricted 8-fold protocol, ProgressIQA achieves PLCC 0.7082 / SROCC 0.7107, outperforming the IMQA baseline (0.5616 / 0.5486) and even surpassing the SROCC reported for the original 10-fold evaluation (PLCC 0.7253 / SROCC 0.6870).
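
The pseudo-labeling and balancing steps described in this abstract can be sketched roughly as follows. This is an illustrative sketch only, not the ProgressIQA code: averaging as the ensembling rule, a score range of [0, 1], equal-width bins, and the names `ensemble_pseudo_labels` and `stratified_sample` are all assumptions introduced here.

```python
import random
from collections import defaultdict
from statistics import mean


def ensemble_pseudo_labels(fold_predictions: list[list[float]]) -> list[float]:
    """Average per-image scores across fold-specific models (assumed rule)."""
    return [mean(scores) for scores in zip(*fold_predictions)]


def stratified_sample(labels: list[float], num_bins: int, per_bin: int,
                      lo: float = 0.0, hi: float = 1.0, seed: int = 0) -> list[int]:
    """Pick at most per_bin image indices from each equal-width score bin."""
    rng = random.Random(seed)
    width = (hi - lo) / num_bins
    bins: dict[int, list[int]] = defaultdict(list)
    for idx, score in enumerate(labels):
        # Clamp so that scores at or beyond the range edges land in a valid bin.
        b = max(0, min(int((score - lo) / width), num_bins - 1))
        bins[b].append(idx)
    chosen: list[int] = []
    for b in sorted(bins):
        members = bins[b]
        chosen.extend(rng.sample(members, min(per_bin, len(members))))
    return sorted(chosen)


if __name__ == "__main__":
    # Two hypothetical fold models scoring five unlabeled images.
    preds = [[0.10, 0.20, 0.90, 0.15, 0.80],
             [0.30, 0.20, 0.70, 0.05, 0.60]]
    pseudo = ensemble_pseudo_labels(preds)
    print(pseudo, stratified_sample(pseudo, num_bins=2, per_bin=1))
```

Capping the number of samples per bin keeps densely populated score ranges from dominating the pseudo-labeled set, which is one plausible reading of "balanced through stratified sampling".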

[PDF]

Hadi Amirpour, MohammadAli Hamidi, Wei Zhou, Luigi Atzori, Christian Timmerer

Abstract: Live video broadcasting has become widely accessible through popular platforms such as Instagram, Facebook, and YouTube, enabling real-time content sharing and user interaction. While the Quality of Experience (QoE) has been extensively studied for Video-on-Demand (VoD) services, the QoE of live broadcast videos remains relatively underexplored. In this paper, we address this gap by proposing a novel machine learning–based model for QoE prediction in live video broadcasting scenarios. Our approach, BiNR, introduces two models: BiNR_fast, which uses only bitstream features for ultra-fast QoE predictions, and the full model BiNR_full, which integrates bitstream features with a pixel-based no-reference (NR) quality metric that works on the decoded signal.

We evaluate multiple regression models to predict subjective QoE scores and further conduct feature importance analysis. Experimental results show that our full model achieves a Pearson Correlation Coefficient (PCC)/Spearman Rank Correlation Coefficient (SRCC) of 0.92/0.92 with subjective scores, significantly outperforming the state-of-the-art methods.
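
For readers unfamiliar with the two reported metrics, the sketch below computes PCC and SRCC between predicted and subjective scores in plain Python. The function names and the sample scores are made up for illustration and are not taken from the paper; in practice a library such as SciPy would normally be used.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation: linear agreement between two score lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)


def average_ranks(values: list[float]) -> list[float]:
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman correlation: Pearson correlation of the rank-transformed scores."""
    return pearson(average_ranks(x), average_ranks(y))


if __name__ == "__main__":
    predicted = [1.0, 2.0, 3.0, 4.0, 5.0]    # hypothetical model outputs
    subjective = [1.0, 4.0, 9.0, 16.0, 25.0]  # hypothetical subjective scores
    print(pearson(predicted, subjective), spearman(predicted, subjective))
```

PCC rewards predictions that are linearly related to the subjective scores, while SRCC only checks that the ordering is preserved, so a model can reach a perfect SRCC with a PCC below 1, as in the toy data above.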

[PDF]

Yiying Wei (AAU, Austria), Hadi Amirpour (AAU, Austria), and Christian Timmerer (AAU, Austria)

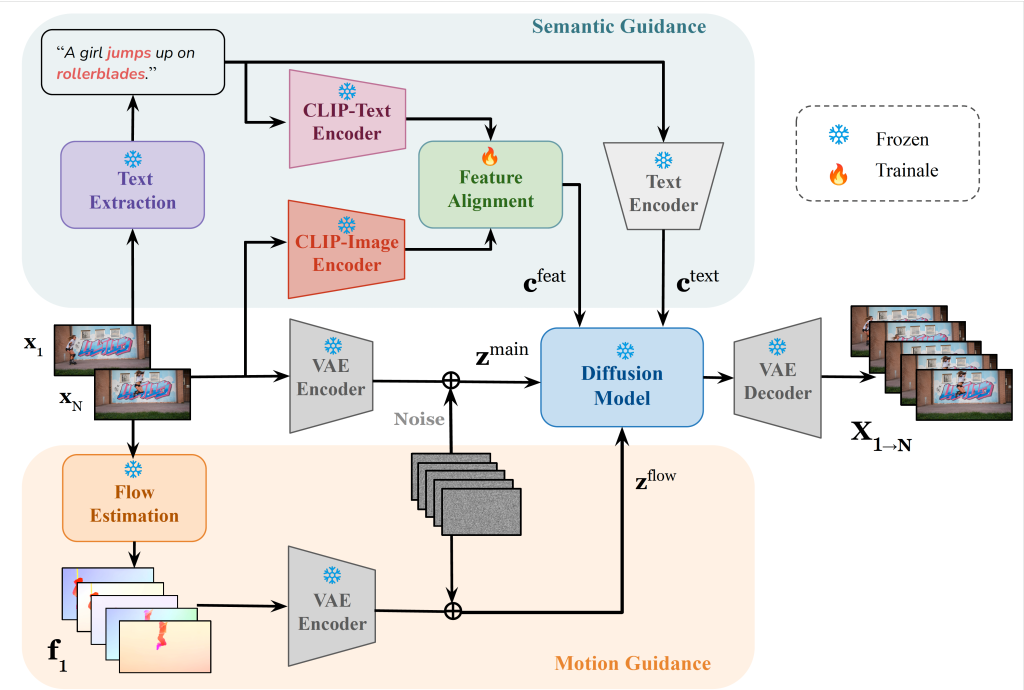

Abstract: Video frame interpolation (VFI) aims to generate intermediate frames between given keyframes to enhance temporal resolution and visual smoothness. While conventional optical flow–based methods and recent generative approaches achieve promising results, they often struggle with large displacements, failing to maintain temporal coherence and semantic consistency. In this work, we propose dual-guided generative frame interpolation (DGFI), a framework that integrates semantic guidance from vision-language models and flow guidance into a pre-trained diffusion-based image-to-video (I2V) generator. Specifically, DGFI extracts textual descriptions and injects multimodal embeddings to capture high-level semantics, while estimated motion guidance provides smooth transitions. Experiments on public datasets demonstrate the effectiveness of our dual-guided method over the state-of-the-art approaches.