The 13th ACM Multimedia Systems Conference (ACM MMSys 2022) Open Dataset and Software (ODS) track

June 14–17, 2022 | Athlone, Ireland


Vignesh V Menon (Alpen-Adria-Universität Klagenfurt), Christian Feldmann (Bitmovin, Klagenfurt), Hadi Amirpour (Alpen-Adria-Universität Klagenfurt), Mohammad Ghanbari (School of Computer Science and Electronic Engineering, University of Essex, Colchester, UK), and Christian Timmerer (Alpen-Adria-Universität Klagenfurt).

Abstract:

VCA in content-adaptive encoding applications

For online analysis of video content complexity in live streaming applications, selecting low-complexity features is critical to ensure low-latency streaming without disruptions. To this end, two features are determined for each video (segment): the average texture energy and the average gradient of the texture energy. A DCT-based energy function is introduced to determine the block-wise texture of each frame, and the spatial and temporal features of the video (segment) are derived from this function. The Video Complexity Analyzer (VCA) project aims to provide an efficient spatial and temporal complexity analysis of each video (segment), which can be used in various applications to find optimal encoding decisions. To increase performance, VCA uses some x86 Single Instruction Multiple Data (SIMD) optimizations for Intel CPUs as well as multi-threading. VCA is an open-source library published under the GNU GPLv3 license.
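The two features can be illustrated with a minimal pure-Python sketch. It computes an orthonormal 2-D DCT-II for each block, sums the weighted magnitudes of the AC coefficients as the block's texture energy, and then averages over blocks (spatial feature E) and over block-wise energy changes between consecutive frames (temporal feature h). The exponential frequency weighting, the 8x8 block size, dropping partial edge blocks, using frame-to-frame differences as the "gradient", and all function names are assumptions for illustration. This is not VCA's SIMD-optimized implementation, and the exact energy function VCA uses is defined in its documentation.

```python
import math
from typing import List

Block = List[List[float]]


def dct2(block: Block) -> Block:
    """Orthonormal 2-D DCT-II of a square w x w block (naive separable version)."""
    w = len(block)
    # 1-D DCT-II basis: basis[u][x] = a(u) * cos(pi * (2x + 1) * u / (2w))
    basis = [
        [
            (math.sqrt(1.0 / w) if u == 0 else math.sqrt(2.0 / w))
            * math.cos(math.pi * (2 * x + 1) * u / (2 * w))
            for x in range(w)
        ]
        for u in range(w)
    ]
    # Transform along each row, then along each column: C = B * X * B^T
    rows = [[sum(basis[v][y] * block[x][y] for y in range(w)) for v in range(w)]
            for x in range(w)]
    return [[sum(basis[u][x] * rows[x][v] for x in range(w)) for v in range(w)]
            for u in range(w)]


def block_texture_energy(block: Block) -> float:
    """Weighted sum of |AC coefficients|; the DC term (mean level) is skipped.

    ASSUMPTION: the weight exp(|(i*j / w^2)^2 - 1|) is illustrative only;
    the exact weighting VCA uses is defined in its documentation.
    """
    w = len(block)
    coeffs = dct2(block)
    energy = 0.0
    for i in range(w):
        for j in range(w):
            if i == 0 and j == 0:
                continue  # DC carries mean brightness, not texture
            weight = math.exp(abs((i * j / (w * w)) ** 2 - 1))
            energy += weight * abs(coeffs[i][j])
    return energy


def split_blocks(frame: Block, w: int) -> List[Block]:
    """Cut a luma frame into non-overlapping w x w blocks (partial edge blocks dropped)."""
    height, width = len(frame), len(frame[0])
    return [[row[x:x + w] for row in frame[y:y + w]]
            for y in range(0, height - w + 1, w)
            for x in range(0, width - w + 1, w)]


def frame_energies(frame: Block, w: int = 8) -> List[float]:
    """Block-wise texture energy of one frame, in raster order."""
    return [block_texture_energy(b) for b in split_blocks(frame, w)]


def spatial_feature(energies: List[float]) -> float:
    """E: average texture energy over all blocks of a frame."""
    return sum(energies) / len(energies)


def temporal_feature(current: List[float], previous: List[float]) -> float:
    """h (assumed form): average absolute block-wise energy change between consecutive frames."""
    return sum(abs(c - p) for c, p in zip(current, previous)) / len(current)


if __name__ == "__main__":
    flat = [[128.0] * 16 for _ in range(16)]                       # textureless frame
    ramp = [[float(x * 8) for x in range(16)] for _ in range(16)]  # horizontal gradient
    e_flat, e_ramp = frame_energies(flat), frame_energies(ramp)
    print(spatial_feature(e_flat))           # close to 0: only DC terms are non-zero
    print(spatial_feature(e_ramp))           # positive: horizontal AC energy
    print(temporal_feature(e_ramp, e_flat))  # positive: texture changed between frames
```

A segment-level value would then be obtained by averaging the per-frame E and h over the frames of the segment; the per-block loop is also the natural unit to parallelize across threads.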

GitHub: https://github.com/cd-athena/VCA

Online documentation: https://cd-athena.github.io/VCA/

Applications

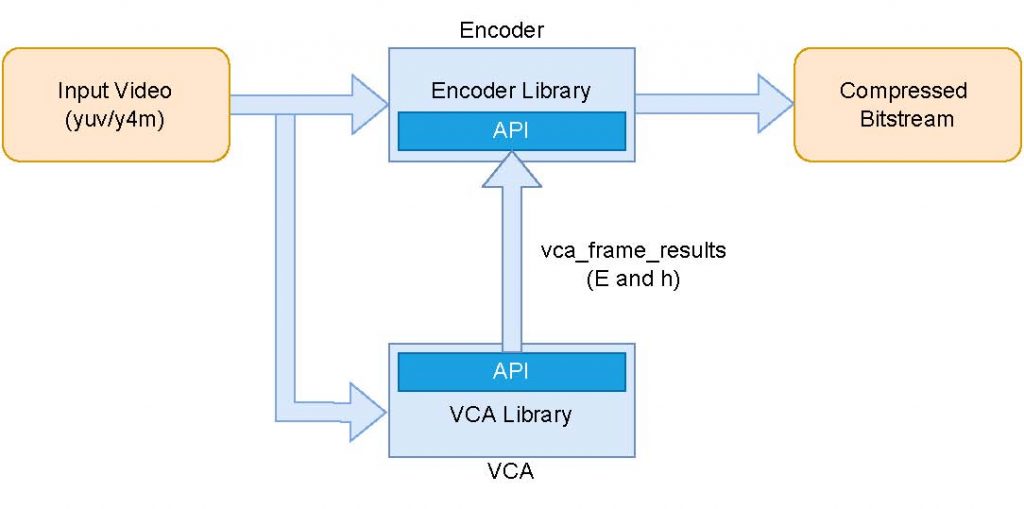

One target application of VCA is content-adaptive encoding, in which raw video frames (YUV or Y4M) are input to VCA, which analyzes the spatial and temporal characteristics of the video. This analysis is passed to the encoder through an application programming interface (API) to aid the encoding process. Since VCA's analysis is codec-agnostic, encoder implementations of any codec can use the analysis data, which VCA produces at 370 fps for 2160p content.
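The codec-agnostic hand-off described above can be sketched as plain data passed to an encoder hook. Everything here is hypothetical: `SegmentComplexity`, `ComplexityConsumer`, `RecordingEncoder`, `dispatch`, and `frame_budget_ms` are illustrative names, not VCA's actual API (the online documentation describes that). The sketch only shows why a codec-independent result lets any encoder that implements the hook consume the same analysis.

```python
from dataclasses import dataclass
from typing import Iterable, List, Protocol


@dataclass(frozen=True)
class SegmentComplexity:
    """Hypothetical codec-agnostic analysis result for one frame or segment."""
    index: int
    spatial_e: float   # average texture energy (E)
    temporal_h: float  # average gradient of the texture energy (h)


class ComplexityConsumer(Protocol):
    """Hypothetical hook that an encoder of any codec could implement."""
    def on_complexity(self, result: SegmentComplexity) -> None: ...


class RecordingEncoder:
    """Stand-in encoder that only records what it receives; a real encoder
    would map E and h to its own encoding decisions."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.received: List[SegmentComplexity] = []

    def on_complexity(self, result: SegmentComplexity) -> None:
        self.received.append(result)


def dispatch(results: Iterable[SegmentComplexity],
             consumers: List[ComplexityConsumer]) -> int:
    """Forward each analysis result, in order, to every registered encoder hook."""
    count = 0
    for result in results:
        for consumer in consumers:
            consumer.on_complexity(result)
        count += 1
    return count


def frame_budget_ms(analysis_fps: float) -> float:
    """Per-frame analysis time implied by a given analysis throughput."""
    return 1000.0 / analysis_fps


if __name__ == "__main__":
    codec_a, codec_b = RecordingEncoder("codec-a"), RecordingEncoder("codec-b")
    results = [SegmentComplexity(i, 10.0 * i, 1.0 * i) for i in range(3)]
    dispatch(results, [codec_a, codec_b])
    print(len(codec_a.received), len(codec_b.received))
    print(round(frame_budget_ms(370), 2))
```

Both stand-in encoders receive identical results, since nothing in the data is tied to a codec. At the quoted 370 fps for 2160p, `frame_budget_ms(370)` is about 2.7 ms of analysis time per frame.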

For more on the possible applications of VCA, see the following blog posts: