X4-MATCH: Sustainable Prediction-based Distribution of Video Encoding on Cloud and Edge

40th IEEE International Parallel & Distributed Processing Symposium

May 25-29, 2026

New Orleans, USA

https://www.ipdps.org/

Samira Afzal (Baylor University), Narges Mehran (University of Salzburg), Andrew C. Freeman (Baylor University), Manuel Hoi (University of Klagenfurt), Armin Lachini (University of Klagenfurt), Radu Prodan (University of Innsbruck), Christian Timmerer (University of Klagenfurt)

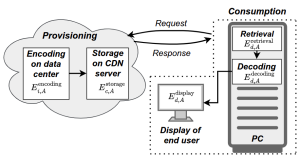

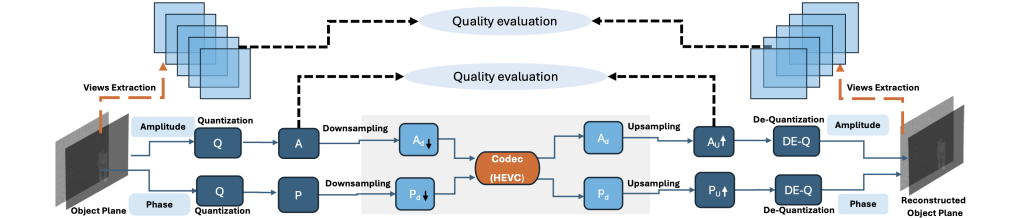

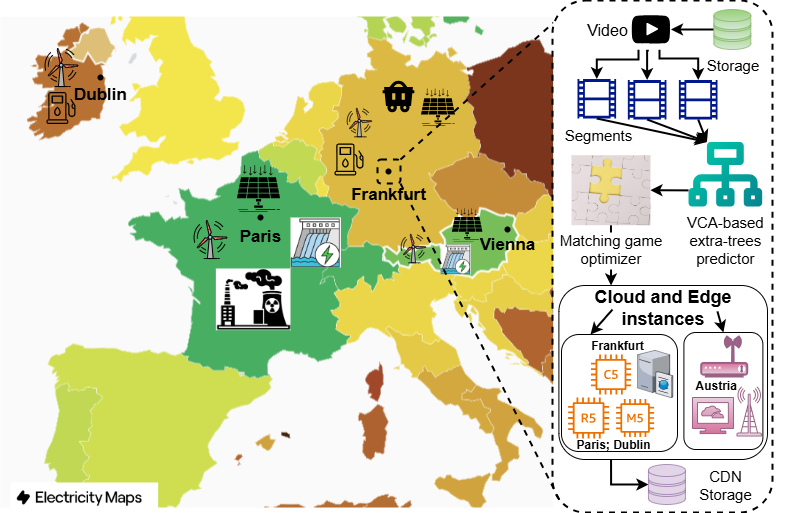

Abstract: The rapid expansion of video traffic has made it one of the most energy-intensive workloads on cloud and edge infrastructures. As encoding remains essential for streaming, gaming, and immersive applications, efficient task scheduling is required to balance service quality, cost efficiency, and sustainability. In this work, we propose X4-MATCH, a sustainable scheduling framework that integrates machine learning–based performance prediction with game-theoretic matching to distribute video encoding workloads across cloud–edge infrastructures. The framework formulates four key performance metrics, namely processing and transmission time, price, energy use, and CO2 emissions, as optimization objectives to balance performance and sustainability goals. It uses the eXtra-trees regressor model to predict these metrics for video encoding tasks and combines the predictions with a matching theory-based resource allocation strategy to efficiently utilize computational resources across cloud and edge. We experimentally validate X4-MATCH on a real-world testbed incorporating Amazon Web Services (AWS) cloud virtual machines/instances and local edge servers. Results show that X4-MATCH outperforms state-of-the-art methods by reducing total time by 63.3%, price by 54.2%, and energy by 56.8%.

Index Terms: Video encoding, energy efficiency, cloud and edge, matching theory, extra-trees regressor

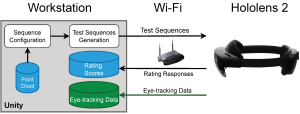

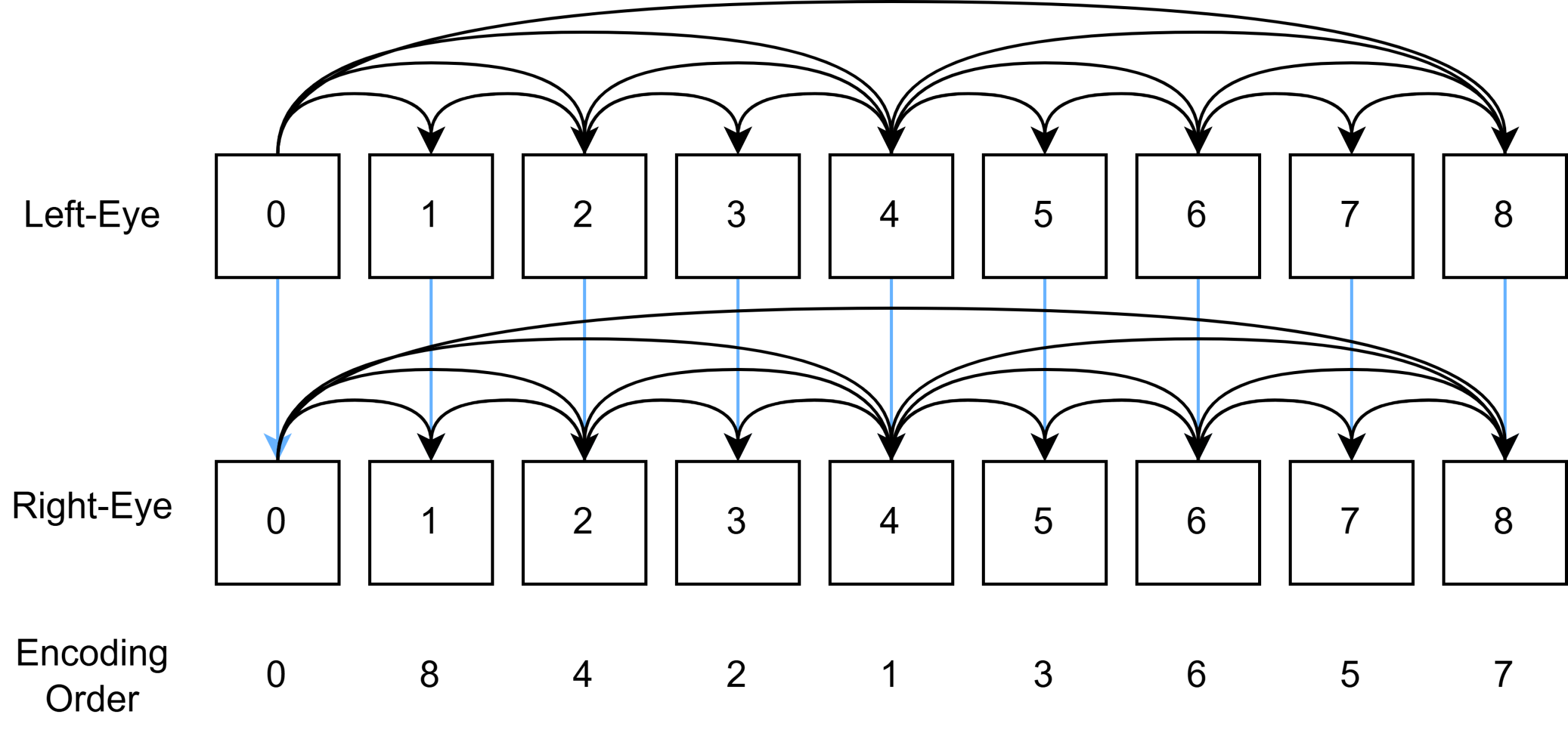

X4-MATCH distribution overview
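
To make the idea of pairing predicted metrics with matching-based allocation more concrete, the following is a minimal sketch and not the authors' implementation. It assumes a textbook many-to-one deferred-acceptance (Gale-Shapley style) matching, an equal-weight sum over the four metrics, and metric values already normalized to [0, 1]. The function names, weights, capacities, and all numbers are hypothetical placeholders; in X4-MATCH the per-task metrics would come from the extra-trees regressor, and the actual matching rules may differ.

```python
from collections import deque


def weighted_cost(metrics, weights):
    """Scalarize predicted metrics with a weighted sum (an assumed objective)."""
    return sum(weights[name] * metrics[name] for name in weights)


def deferred_acceptance(predicted, capacity, weights):
    """Many-to-one deferred acceptance: tasks propose, resources keep their cheapest tasks.

    predicted[task][resource] is a dict of predicted metrics (hypothetical values here).
    capacity[resource] is the number of tasks a resource can hold.
    """
    cost = {
        task: {res: weighted_cost(m, weights) for res, m in per_res.items()}
        for task, per_res in predicted.items()
    }
    # Each task ranks resources from lowest to highest scalarized cost.
    prefs = {task: deque(sorted(c, key=c.get)) for task, c in cost.items()}
    held = {res: [] for res in capacity}
    free = deque(predicted)
    while free:
        task = free.popleft()
        if not prefs[task]:
            continue  # task was rejected everywhere and stays unassigned
        res = prefs[task].popleft()
        held[res].append(task)
        if len(held[res]) > capacity[res]:
            # Over capacity: the resource rejects its most costly held task.
            worst = max(held[res], key=lambda t: cost[t][res])
            held[res].remove(worst)
            free.append(worst)
    return {task: res for res, tasks in held.items() for task in tasks}


# Hypothetical, pre-normalized predictions for three encoding tasks.
weights = {"time": 0.25, "price": 0.25, "energy": 0.25, "co2": 0.25}
predicted = {
    "seg1": {"edge": {"time": 0.2, "price": 0.1, "energy": 0.2, "co2": 0.1},
             "cloud": {"time": 0.4, "price": 0.5, "energy": 0.4, "co2": 0.5}},
    "seg2": {"edge": {"time": 0.3, "price": 0.1, "energy": 0.3, "co2": 0.1},
             "cloud": {"time": 0.3, "price": 0.4, "energy": 0.3, "co2": 0.4}},
    "seg3": {"edge": {"time": 0.6, "price": 0.2, "energy": 0.6, "co2": 0.2},
             "cloud": {"time": 0.2, "price": 0.4, "energy": 0.2, "co2": 0.4}},
}
capacity = {"edge": 1, "cloud": 2}

assignment = deferred_acceptance(predicted, capacity, weights)
print(assignment)
```

With these placeholder inputs, the single edge slot goes to the task that is cheapest there, and the other two tasks fall back to the cloud. Changing the weights shifts the trade-off between time, price, energy, and CO2, which is the kind of balance the abstract describes.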