MPEC2: Multilayer and Pipeline Video Encoding on the Computing Continuum

Conference: IEEE NCA 2022

Samira Afzal (Alpen-Adria-Universität Klagenfurt), Zahra Najafabadi Samani (Alpen-Adria-Universität Klagenfurt), Narges Mehran (Alpen-Adria-Universität Klagenfurt), Christian Timmerer (Alpen-Adria-Universität Klagenfurt), and Radu Prodan (Alpen-Adria-Universität Klagenfurt)

Abstract:

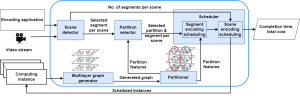

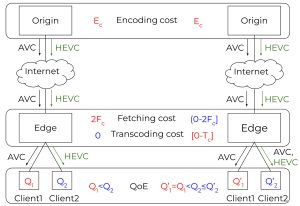

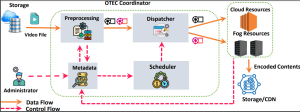

Video streaming accounts for the dominant share of traffic in today's data-sharing world. Media service providers stream video content to their viewers, while users worldwide create and distribute videos through mobile or video system applications, further increasing this traffic share. We propose a multilayer and pipeline encoding on the computing continuum (MPEC2) method that addresses the key technical challenge of the high cost and computational complexity of video encoding. MPEC2 splits video encoding into several tasks and schedules them on appropriately selected Cloud and Fog computing instance types that satisfy the time and cost priorities of media service providers and users.
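The abstract does not state how MPEC2 weighs time against cost. As a minimal illustration of matching instance types to time and cost priorities, the Python sketch below scores each candidate by a weighted sum of normalized encoding time and normalized cost. The `InstanceType` class, the `select_instance` function, the `time_weight` parameter, and all instance names, timings, and prices are hypothetical assumptions, not the authors' model or real provider data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceType:
    # Hypothetical instance description; not a real provider SKU or price list.
    name: str              # illustrative label
    layer: str             # continuum layer, "cloud" or "fog" (label only)
    encode_time_s: float   # assumed time to encode one video segment
    price_per_hour: float  # assumed price in USD per hour

    def segment_cost(self) -> float:
        # Cost of occupying the instance for one segment, assuming per-second billing.
        return self.price_per_hour * self.encode_time_s / 3600.0


def select_instance(candidates, time_weight):
    """Return the candidate with the lowest weighted, normalized time/cost score.

    time_weight lies in [0, 1]: 1.0 considers only encoding time,
    0.0 considers only cost. This weighting is an illustrative assumption.
    """
    max_time = max(c.encode_time_s for c in candidates)
    max_cost = max(c.segment_cost() for c in candidates)

    def score(c):
        # Normalize both objectives to [0, 1] so they are comparable.
        return (time_weight * c.encode_time_s / max_time
                + (1.0 - time_weight) * c.segment_cost() / max_cost)

    return min(candidates, key=score)


if __name__ == "__main__":
    candidates = [
        InstanceType("cloud-large", "cloud", 20.0, 0.40),
        InstanceType("cloud-small", "cloud", 60.0, 0.05),
        InstanceType("fog-medium", "fog", 35.0, 0.12),
    ]
    for w in (1.0, 0.5, 0.0):
        print(w, select_instance(candidates, w).name)
```

With these made-up numbers, a weight of 1.0 selects `cloud-large`, 0.5 selects `fog-medium`, and 0.0 selects `cloud-small`, showing how shifting priorities can move a task to a different layer of the continuum.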

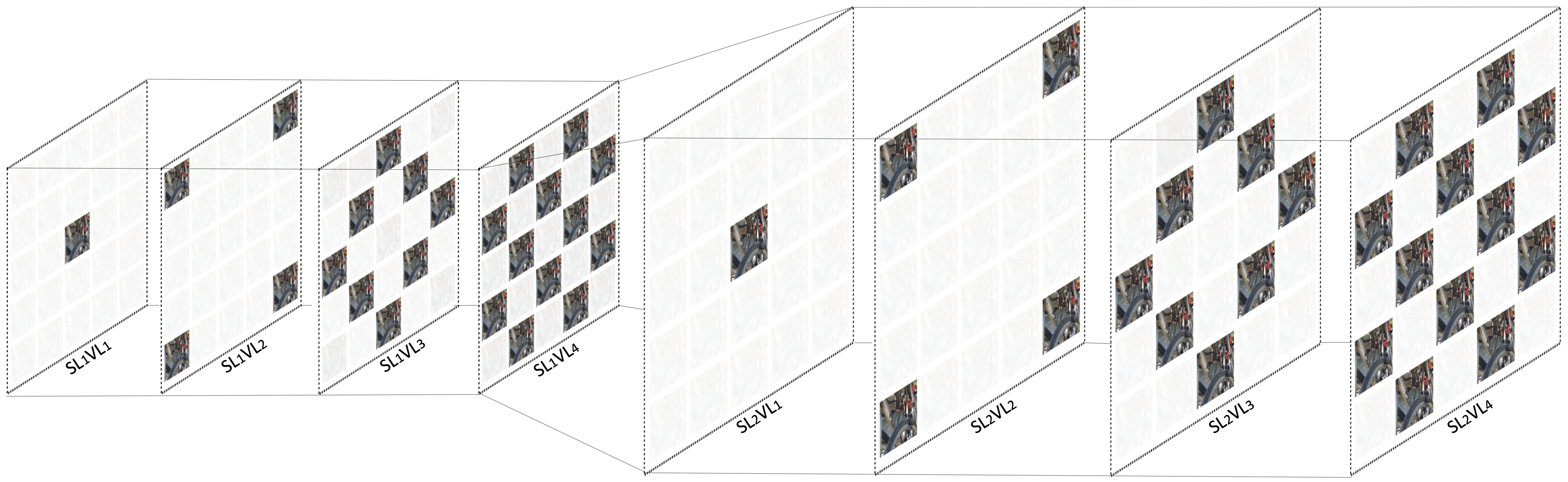

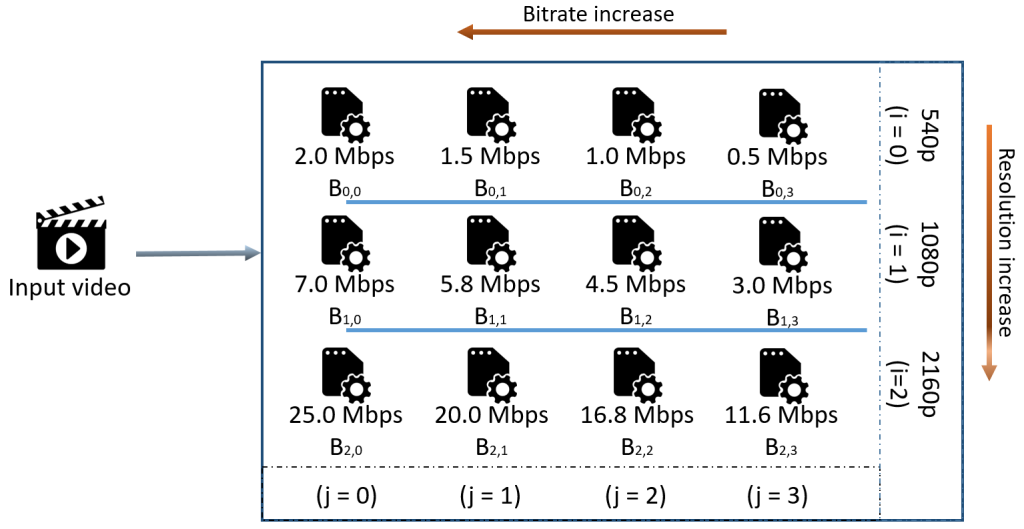

In the first phase, MPEC2 uses a multilayer resource partitioning method to explore suitable instance types for encoding a video segment. In the second phase, it distributes the independent segment encoding tasks across the underlying instances in a pipeline model.
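To illustrate why distributing independent segment encoding tasks across several instances shortens completion time, the following sketch uses a generic greedy earliest-finish-time heuristic; it is not the MPEC2 scheduling algorithm itself. The `pipeline_schedule` function, the per-second billing model, and the instance names, timings, and prices are hypothetical assumptions.

```python
def pipeline_schedule(num_segments, instances):
    """Greedily assign independent segments to instances (illustrative only).

    instances: dict mapping a hypothetical instance name to
               (encode_time_s per segment, price_per_hour in USD).
    Each segment goes to the instance on which it would finish earliest,
    so different instances encode different segments concurrently.
    Returns (assignment, makespan_s, total_cost_usd), where assignment is a
    list of (segment_index, instance_name, finish_time_s).
    """
    busy_until = {name: 0.0 for name in instances}
    assignment = []
    for seg in range(num_segments):
        # Pick the instance with the earliest finish time; ties broken by name.
        best = min(instances, key=lambda n: (busy_until[n] + instances[n][0], n))
        busy_until[best] += instances[best][0]
        assignment.append((seg, best, busy_until[best]))
    makespan = max(busy_until.values())
    # Assumes each instance is billed per second only while it is encoding.
    cost = sum(busy_until[n] / 3600.0 * instances[n][1] for n in instances)
    return assignment, makespan, cost


if __name__ == "__main__":
    hypothetical = {"cloud-large": (20.0, 0.40), "fog-medium": (35.0, 0.12)}
    plan, makespan, cost = pipeline_schedule(6, hypothetical)
    for seg, inst, finish in plan:
        print(seg, inst, finish)
    print("makespan_s:", makespan, "cost_usd:", round(cost, 6))
```

For six segments on the two hypothetical instances, the greedy schedule finishes in 80 s, compared with 120 s if `cloud-large` encoded all six segments on its own.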

We evaluate MPEC2 on a federated computing continuum encompassing Amazon Web Services (AWS) EC2 Cloud and Exoscale Fog instances distributed across seven geographical locations. Experimental results show that MPEC2 achieves 24% faster completion time and 60% lower video encoding cost than related resource allocation methods. Compared with baseline methods, MPEC2 yields 40% to 50% lower completion time and 5% to 60% lower total cost.