Special issue on Open Media Compression: Overview, Design Criteria, and Outlook on Emerging Standards

Proceedings of the IEEE, vol. 109, no. 9, Sept. 2021

By CHRISTIAN TIMMERER, Senior Member IEEE

Guest Editor

MATHIAS WIEN, Member IEEE

Guest Editor

LU YU, Senior Member IEEE

Guest Editor

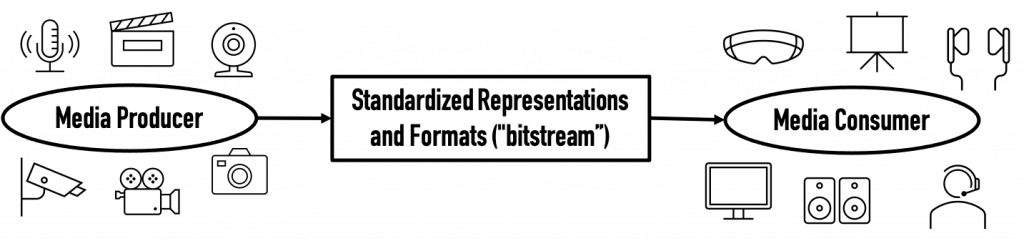

AMY REIBMAN, Fellow IEEE

Guest Editor

Abstract: Multimedia content (i.e., video, image, audio) is responsible for the majority of today’s Internet traffic, and its share is expected to grow beyond 80% in the near future. For more than 30 years, international standards have provided tools for interoperability and have been both source and sink for challenging research activities in the domain of multimedia compression and system technologies. The goal of this special issue is to review those standards and focus on (i) the technology developed in the context of these standards and (ii) research questions addressing aspects of these standards that are left open for competition by both academia and industry.

Index Terms—Open Media Standards, MPEG, JPEG, JVET, AOM, Computational Complexity

C. Timmerer, M. Wien, L. Yu and A. Reibman, “Special issue on Open Media Compression: Overview, Design Criteria, and Outlook on Emerging Standards,” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1423-1434, Sept. 2021, doi: 10.1109/JPROC.2021.3098048.

A Technical Overview of AV1

J. Han et al., “A Technical Overview of AV1,” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1435-1462, Sept. 2021, doi: 10.1109/JPROC.2021.3058584.

Abstract: The AV1 video compression format is developed by the Alliance for Open Media consortium. It achieves more than a 30% reduction in bit rate compared to its predecessor VP9 for the same decoded video quality. This article provides a technical overview of the AV1 codec design that enables the compression performance gains with considerations for hardware feasibility.

Developments in International Video Coding Standardization After AVC, With an Overview of Versatile Video Coding (VVC)

B. Bross, J. Chen, J. -R. Ohm, G. J. Sullivan and Y. -K. Wang, “Developments in International Video Coding Standardization After AVC, With an Overview of Versatile Video Coding (VVC),” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1463-1493, Sept. 2021, doi: 10.1109/JPROC.2020.3043399.

Abstract: In the last 17 years, since the finalization of the first version of the now-dominant H.264/Moving Picture Experts Group-4 (MPEG-4) Advanced Video Coding (AVC) standard in 2003, two major new generations of video coding standards have been developed. These include the standards known as High Efficiency Video Coding (HEVC) and Versatile Video Coding (VVC). HEVC was finalized in 2013, repeating the ten-year cycle time set by its predecessor and providing about 50% bit-rate reduction over AVC. The cycle was shortened by three years for the VVC project, which was finalized in July 2020, yet again achieving about a 50% bit-rate reduction over its predecessor (HEVC). This article summarizes these developments in video coding standardization after AVC. It especially focuses on providing an overview of the first version of VVC, including comparisons against HEVC. Besides further advances in hybrid video compression, as in previous development cycles, the broad versatility of the application domain that is highlighted in the title of VVC is explained. Included in VVC is the support for a wide range of applications beyond the typical standard- and high-definition camera-captured content codings, including features to support computer-generated/screen content, high dynamic range content, multilayer and multiview coding, and support for immersive media such as 360° video.
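The two "about 50%" figures quoted in this abstract compound rather than add. The short sketch below works through that arithmetic; the function name is hypothetical, and the 50% values are the nominal figures from the abstract, not measured results for any particular content.

```python
def cumulative_bitrate_fraction(reductions):
    """Return the fraction of the original bit rate left after applying
    each fractional reduction in sequence (0.5 means a 50% reduction)."""
    fraction = 1.0
    for r in reductions:
        fraction *= 1.0 - r
    return fraction


# Nominal figures from the abstract: AVC -> HEVC (~50%), HEVC -> VVC (~50%).
vvc_vs_avc = cumulative_bitrate_fraction([0.5, 0.5])
print(f"VVC needs roughly {vvc_vs_avc:.0%} of the AVC bit rate at similar quality")
```

Under these nominal assumptions, VVC would need about a quarter of the AVC bit rate for comparable quality; actual savings depend on content, resolution, and encoder configuration.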

Advances in Video Compression System Using Deep Neural Network: A Review and Case Studies

D. Ding, Z. Ma, D. Chen, Q. Chen, Z. Liu and F. Zhu, “Advances in Video Compression System Using Deep Neural Network: A Review and Case Studies,” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1494-1520, Sept. 2021, doi: 10.1109/JPROC.2021.3059994.

Abstract: Significant advances in video compression systems have been made in the past several decades to satisfy the near-exponential growth of Internet-scale video traffic. From the application perspective, we have identified three major functional blocks, including preprocessing, coding, and postprocessing, which have been continuously investigated to maximize the end-user quality of experience (QoE) under a limited bit rate budget. Recently, artificial intelligence (AI)-powered techniques have shown great potential to further increase the efficiency of the aforementioned functional blocks, both individually and jointly. In this article, we review recent technical advances in video compression systems extensively, with an emphasis on deep neural network (DNN)-based approaches, and then present three comprehensive case studies. On preprocessing, we show a switchable texture-based video coding example that leverages DNN-based scene understanding to extract semantic areas for the improvement of a subsequent video coder. On coding, we present an end-to-end neural video coding framework that takes advantage of the stacked DNNs to efficiently and compactly code input raw videos via fully data-driven learning. On postprocessing, we demonstrate two neural adaptive filters to, respectively, facilitate the in-loop and postfiltering for the enhancement of compressed frames. Finally, a companion website hosting the contents developed in this work can be accessed publicly at https://purdueviper.github.io/dnn-coding/.

MPEG Immersive Video Coding Standard

J. M. Boyce et al., “MPEG Immersive Video Coding Standard,” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1521-1536, Sept. 2021, doi: 10.1109/JPROC.2021.3062590.

Abstract: This article introduces the ISO/IEC MPEG Immersive Video (MIV) standard, MPEG-I Part 12, which is undergoing standardization. The draft MIV standard provides support for viewing immersive volumetric content captured by multiple cameras with six degrees of freedom (6DoF) within a viewing space that is determined by the camera arrangement in the capture rig. The bitstream format and decoding processes of the draft specification along with aspects of the Test Model for Immersive Video (TMIV) reference software encoder, decoder, and renderer are described. The use cases, test conditions, quality assessment methods, and experimental results are provided. In the TMIV, multiple texture and geometry views are coded as atlases of patches using a legacy 2-D video codec, while optimizing for bitrate, pixel rate, and quality. The design of the bitstream format and decoder is based on the visual volumetric video-based coding (V3C) and video-based point cloud compression (V-PCC) standard, MPEG-I Part 5.

Compression of Sparse and Dense Dynamic Point Clouds—Methods and Standards

C. Cao, M. Preda, V. Zakharchenko, E. S. Jang and T. Zaharia, “Compression of Sparse and Dense Dynamic Point Clouds—Methods and Standards,” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1537-1558, Sept. 2021, doi: 10.1109/JPROC.2021.3085957.

Abstract: This article presents a survey of point cloud compression (PCC) methods, organized with respect to data structure, coding representation space, and prediction strategies. Two paramount families of approaches reported in the literature (the projection- and octree-based methods) are proven to be efficient for encoding dense and sparse point clouds, respectively. These approaches are the pillars on which the Moving Picture Experts Group Committee developed two PCC standards published as final international standards in 2020 and early 2021, respectively, under the names video-based PCC and geometry-based PCC. After surveying the current approaches for PCC, the technologies underlying the two standards are described in detail from an encoder perspective, providing guidance for potential standard implementors. In addition, experimental evaluations of the compression performance of both solutions are provided.
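To make the octree-based family concrete, the following is a minimal sketch of generic octree occupancy coding: each occupied node emits one byte whose bits flag which of its eight children contain points. It is an illustration only, not the geometry-based PCC syntax; the function name, the breadth-first order, and the bit layout are assumptions chosen for clarity.

```python
from collections import deque


def octree_occupancy(points, depth):
    """Return breadth-first occupancy bytes for integer points whose
    coordinates lie in [0, 2**depth) along each axis."""
    codes = []
    # Each queue entry: node origin (ox, oy, oz), remaining levels, points inside.
    queue = deque([(0, 0, 0, depth, list(points))])
    while queue:
        ox, oy, oz, level, pts = queue.popleft()
        if level == 0:
            continue  # leaf voxel: nothing further to subdivide
        half = 1 << (level - 1)
        children = [[] for _ in range(8)]
        for x, y, z in pts:
            # Child index: bit 2 from x, bit 1 from y, bit 0 from z.
            idx = (int(x - ox >= half) << 2) | (int(y - oy >= half) << 1) | int(z - oz >= half)
            children[idx].append((x, y, z))
        byte = 0
        for idx, child in enumerate(children):
            if child:
                byte |= 1 << idx
                queue.append((ox + half * ((idx >> 2) & 1),
                              oy + half * ((idx >> 1) & 1),
                              oz + half * (idx & 1),
                              level - 1, child))
        codes.append(byte)
    return codes


# Two points in opposite corners of a 4x4x4 grid.
print(octree_occupancy([(0, 0, 0), (3, 3, 3)], depth=2))  # [129, 1, 128]
```

Sparse clouds leave most children empty, so the occupancy bytes are highly skewed and compress well with an entropy coder, which is one intuition for why octrees suit sparse data.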

JPEG XS—A New Standard for Visually Lossless Low-Latency Lightweight Image Coding

A. Descampe et al., “JPEG XS—A New Standard for Visually Lossless Low-Latency Lightweight Image Coding,” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1559-1577, Sept. 2021, doi: 10.1109/JPROC.2021.3080916.

Abstract: Joint Photographic Experts Group (JPEG) XS is a new International Standard from the JPEG Committee (formally known as ISO/International Electrotechnical Commission (IEC) JTC1/SC29/WG1). It defines an interoperable, visually lossless low-latency lightweight image coding that can be used for mezzanine compression within any AV market. Among the targeted use cases, one can cite video transport over professional video links (serial digital interface (SDI), internet protocol (IP), and Ethernet), real-time video storage, memory buffers, omnidirectional video capture and rendering, and sensor compression (for example, in cameras and the automotive industry). The core coding system is composed of an optional color transform, a wavelet transform, and a novel entropy encoder, processing groups of coefficients by coding their magnitude level and packing the magnitude refinement. Such a design allows for visually transparent quality at moderate compression ratios, scalable end-to-end latency that ranges from less than one line to a maximum of 32 lines of the image, and a low-complexity real-time implementation in application-specific integrated circuit (ASIC), field-programmable gate array (FPGA), central processing unit (CPU), and graphics processing unit (GPU). This article details the key features of this new standard and the profiles and formats that have been defined so far for the various applications. It also gives a technical description of the core coding system. Finally, the latest performance evaluation results of recent implementations of the standard are presented, followed by the current status of the ongoing standardization process and future milestones.
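As an illustration of the wavelet stage mentioned in this abstract, here is a minimal sketch of one level of a reversible integer 5/3 lifting transform on a 1-D signal. The abstract does not name the filter, so the choice of the 5/3 filter, the boundary handling, and the function names are assumptions; this is not the normative JPEG XS transform.

```python
def forward_53(x):
    """One level of an integer 5/3 lifting transform on an even-length list.
    Returns (low-pass samples s, high-pass samples d)."""
    n = len(x) // 2
    even, odd = x[0::2], x[1::2]
    # Predict step: each odd sample minus the floor-average of its even neighbours
    # (the right edge is mirrored by reusing the last even sample).
    d = [odd[i] - ((even[i] + even[min(i + 1, n - 1)]) >> 1) for i in range(n)]
    # Update step: smooth the even samples using the neighbouring details
    # (the left edge is mirrored by reusing the first detail).
    s = [even[i] + ((d[max(i - 1, 0)] + d[i] + 2) >> 2) for i in range(n)]
    return s, d


def inverse_53(s, d):
    """Exactly undo forward_53 by running the lifting steps in reverse order."""
    n = len(s)
    even = [s[i] - ((d[max(i - 1, 0)] + d[i] + 2) >> 2) for i in range(n)]
    odd = [d[i] + ((even[i] + even[min(i + 1, n - 1)]) >> 1) for i in range(n)]
    x = [0] * (2 * n)
    x[0::2], x[1::2] = even, odd
    return x


signal = [10, 12, 14, 13, 9, 7, 7, 8]
low, high = forward_53(signal)
assert inverse_53(low, high) == signal  # integer lifting is perfectly reversible
```

Because each lifting step only touches a few neighbouring samples, a transform of this kind can run with a very small line buffer, which is consistent with the low-latency goal the abstract describes.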

MPEG Standards for Compressed Representation of Immersive Audio

S. R. Quackenbush and J. Herre, “MPEG Standards for Compressed Representation of Immersive Audio,” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1578-1589, Sept. 2021, doi: 10.1109/JPROC.2021.3075390.

Abstract: The term “immersive audio” is frequently used to describe an audio experience that provides the listener the sensation of being fully immersed or “present” in a sound scene. This can be achieved via different presentation modes, such as surround sound (several loudspeakers horizontally arranged around the listener), 3D audio (with loudspeakers at, above, and below listener ear level), and binaural audio to headphones. This article provides an overview of two recent standards that support the bitrate-efficient carriage of high-quality immersive sound. The first is MPEG-H 3D audio, which is a versatile standard that supports multiple immersive sound signal formats (channels, objects, and higher order ambisonics) and is now being adopted in broadcast and streaming applications. The second is MPEG-I immersive audio, an extension of 3D audio, currently under development, which is targeted for virtual and augmented reality applications. This will support rendering of fully user-interactive immersive sound for three degrees of user movement [three degrees of freedom (3DoF)], i.e., yaw, pitch, and roll head movement, and for six degrees of user movement [six degrees of freedom (6DoF)], i.e., 3DoF plus translational x, y, and z user position movements.
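The distinction between 3DoF and 6DoF can be sketched as a pair of data structures: 3DoF carries only head rotation, while 6DoF adds listener translation. The class and field names, as well as the units, are hypothetical and are not taken from the MPEG-H or MPEG-I specifications.

```python
from dataclasses import dataclass


@dataclass
class Pose3DoF:
    """Head orientation only: yaw, pitch, and roll (assumed to be in degrees)."""
    yaw: float
    pitch: float
    roll: float


@dataclass
class Pose6DoF(Pose3DoF):
    """3DoF orientation plus translational position (assumed to be in metres)."""
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0

    def moved_by(self, dx: float, dy: float, dz: float) -> "Pose6DoF":
        """Return a new pose with the same orientation and a shifted position."""
        return Pose6DoF(self.yaw, self.pitch, self.roll,
                        self.x + dx, self.y + dy, self.z + dz)


listener = Pose6DoF(yaw=90.0, pitch=0.0, roll=0.0)
print(listener.moved_by(1.0, 0.0, 0.5))
```

A 3DoF renderer only needs to counter-rotate the sound scene against the head orientation, whereas a 6DoF renderer must also account for the changing distance and direction to each source as the listener moves.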

An Overview of Omnidirectional MediA Format (OMAF)

M. M. Hannuksela and Y. -K. Wang, “An Overview of Omnidirectional MediA Format (OMAF),” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1590-1606, Sept. 2021, doi: 10.1109/JPROC.2021.3063544.

Abstract: During recent years, there have been product launches and research for enabling immersive audio–visual media experiences. For example, a variety of head-mounted displays and 360° cameras are available in the market. To facilitate interoperability between devices and media system components by different vendors, the Moving Picture Experts Group (MPEG) developed the Omnidirectional MediA Format (OMAF), which is arguably the first virtual reality (VR) system standard. OMAF is a storage and streaming format for omnidirectional media, including 360° video and images, spatial audio, and associated timed text. This article provides a comprehensive overview of OMAF.

An Introduction to MPEG-G: The First Open ISO/IEC Standard for the Compression and Exchange of Genomic Sequencing Data

J. Voges, M. Hernaez, M. Mattavelli and J. Ostermann, “An Introduction to MPEG-G: The First Open ISO/IEC Standard for the Compression and Exchange of Genomic Sequencing Data,” in Proceedings of the IEEE, vol. 109, no. 9, pp. 1607-1622, Sept. 2021, doi: 10.1109/JPROC.2021.3082027.

Abstract: The development and progress of high-throughput sequencing technologies have transformed the sequencing of DNA from a scientific research challenge to practice. With the release of the latest generation of sequencing machines, the cost of sequencing a whole human genome has dropped to less than $600. Such achievements open the door to personalized medicine, where it is expected that genomic information of patients will be analyzed as a standard practice. However, the associated costs, related to storing, transmitting, and processing the large volumes of data, are already comparable to the costs of sequencing. To support the design of new and interoperable solutions for the representation, compression, and management of genomic sequencing data, the Moving Picture Experts Group (MPEG) jointly with working group 5 of ISO/TC276 “Biotechnology” has started to produce the ISO/IEC 23092 series, known as MPEG-G. MPEG-G not only offers higher levels of compression compared with the state of the art but also provides new functionalities, such as built-in support for random access in the compressed domain, support for data protection mechanisms, flexible storage, and streaming capabilities. MPEG-G only specifies the decoding syntax of compressed bitstreams, as well as a file format and a transport format. This allows for the development of new encoding solutions with higher degrees of optimization while maintaining compatibility with any existing MPEG-G decoder.